robots.txt Rerouting on Development Servers

Every website should have a robots.txt file. Some bots hit sites so often that they slow down performance, while others simply aren't desirable. robots.txt files can also be used to communicate your sitemap location and to ask crawlers to limit their request rate. It's important that the correct robots.txt file is served on development servers, though, and that file is usually very different from your production robots.txt file. Here's a quick .htaccess snippet you can use to make that happen:

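```apache
RewriteEngine On
# Only rewrite when the Host header contains "devdomain" (case-insensitive)
RewriteCond %{HTTP_HOST} devdomain [NC]
RewriteRule ^robots\.txt$ robots-go-away.txt [L]
```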

The robots-go-away.txt file should most likely tell all robots not to crawl anything, unless for some reason you want your dev server to be indexed (hint: you really don't want this).
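
To sanity-check what a disallow-all file actually does, here is a small Python sketch using the standard library's `urllib.robotparser`. The file contents (`DEV_ROBOTS`, `PROD_ROBOTS`), the `can_crawl` helper and the example.com URLs are hypothetical: the dev file is the conventional `User-agent: *` / `Disallow: /` pair, and the production file exists only for contrast.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical contents of robots-go-away.txt (served on dev hosts):
# every user agent is told not to crawl any path.
DEV_ROBOTS = """\
User-agent: *
Disallow: /
"""

# Hypothetical production robots.txt, for contrast: crawling is allowed
# except for one private area, and a sitemap location is advertised.
PROD_ROBOTS = """\
User-agent: *
Disallow: /admin/
Sitemap: https://www.example.com/sitemap.xml
"""


def can_crawl(robots_txt: str, url: str, agent: str = "Googlebot") -> bool:
    """Return True if `agent` may fetch `url` under the given robots.txt text."""
    parser = RobotFileParser()
    # parse() accepts an iterable of lines, so no network request is made.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)


if __name__ == "__main__":
    for path in ("/", "/blog/", "/admin/"):
        print(
            f"{path:8} dev={can_crawl(DEV_ROBOTS, 'https://dev.example.com' + path)}"
            f" prod={can_crawl(PROD_ROBOTS, 'https://www.example.com' + path)}"
        )
```

Because `parse()` takes the file's lines directly, you can paste in whatever your dev host actually returns for /robots.txt and check it the same way.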

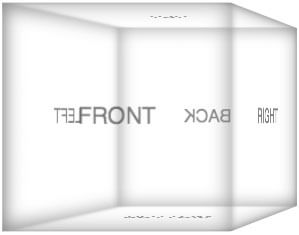

Here’s an example showing how to include multiple development domains:

```apache
RewriteEngine On
# [OR] chains the conditions: the rule applies if any one host matches
RewriteCond %{HTTP_HOST} ^localhost [NC,OR]
RewriteCond %{HTTP_HOST} ^example\.dev [NC,OR]
RewriteCond %{HTTP_HOST} ^test\.example\.com [NC,OR]
RewriteCond %{HTTP_HOST} ^staging\.example\.com [NC]
RewriteRule ^robots\.txt$ robots-disallow.txt [L]
```
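
The [OR] chaining can be hard to read, so here is a rough Python model of how the conditions above decide which file gets served. It is a sketch, not mod_rewrite itself: `rewrite_robots` and `DEV_HOST_PATTERNS` are hypothetical names, the patterns have their dots escaped, and `re.IGNORECASE` assumes the conditions carry the [NC] flag. Like mod_rewrite, it runs an unanchored regex search for each condition, so `^` is what pins a pattern to the start of the host, and the rule fires if any one condition matches.

```python
import re

# Hypothetical list mirroring the RewriteCond host patterns (dots escaped).
DEV_HOST_PATTERNS = [
    r"^localhost",
    r"^example\.dev",
    r"^test\.example\.com",
    r"^staging\.example\.com",
]

# In .htaccess (per-directory) context the rule sees the path without
# its leading slash, so the request /robots.txt is matched as "robots.txt".
ROBOTS_RULE = re.compile(r"^robots\.txt$")


def rewrite_robots(host: str, path: str) -> str:
    """Return the file that would be served for `path` on `host`."""
    # [OR] chaining: any single matching condition is enough.
    # re.IGNORECASE stands in for the assumed [NC] flag.
    is_dev = any(re.search(p, host, re.IGNORECASE) for p in DEV_HOST_PATTERNS)
    if is_dev and ROBOTS_RULE.search(path):
        return "robots-disallow.txt"
    # No rewrite: the request falls through to the original file.
    return path


if __name__ == "__main__":
    for host in ("localhost:8080", "staging.example.com", "www.example.com"):
        print(host, "->", rewrite_robots(host, "robots.txt"))
```

Note that HTTP_HOST can include a port (e.g. `localhost:8080`), which is one reason the patterns anchor only the start of the host rather than the whole string.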